2024-09-18

Mobile ML: Fungi Classifier

87.5% accuracy classifier using DINOv2, optimized for CPU with <200ms latency.

Case Study: Developing a High-Precision Fungi Classifier for Mobile Deployment

This is a simulated consulting project where I evaluated the feasibility of a mobile application for classifying local fungi as edible or poisonous. Working from the perspective of an ML consultant, I selected and trained models, assessed their performance, and suggested practical strategies for deployment and integration.

The challenge was not just building a model; it was engineering a solution that could run reliably on modest mobile hardware while maintaining the accuracy a safety-critical task demands.

🚀 The Engineering Challenge: Constraints & Performance

Unlike laboratory AI projects, this required a solution designed for the "real world." I had to adhere to strict computational and operational constraints:

- Limited Hardware: Optimized for a CPU-only environment with 4 CPUs and a 6 GB RAM limit.

- Operational Latency:

  - Startup: Initialization and model setup completed in under 1 minute.

  - Inference: Predictions returned in under 200 milliseconds per image for a smooth UX.

- Data Integrity: Developed a custom training and validation pipeline from a dataset of 1,001 labeled images.
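The 200 ms inference budget above can be checked with a small timing harness. The following is a minimal sketch, not the project's actual benchmark: `measure_latency_ms` and `meets_budget` are hypothetical names, and judging the budget on the 95th percentile rather than the mean is my assumption.

```python
import math
import statistics
import time
from typing import Any, Callable, Dict, Iterable


def measure_latency_ms(predict: Callable[[Any], Any],
                       samples: Iterable[Any],
                       warmup: int = 3) -> Dict[str, float]:
    """Time `predict` on every sample and summarize latency in milliseconds."""
    items = list(samples)
    if not items:
        raise ValueError("need at least one sample to measure latency")

    # Warm-up calls are not timed, so one-off costs (lazy init, caches)
    # do not distort the per-image numbers.
    for item in items[:warmup]:
        predict(item)

    timings = []
    for item in items:
        start = time.perf_counter()
        predict(item)
        timings.append((time.perf_counter() - start) * 1000.0)

    timings.sort()
    # Nearest-rank 95th percentile on the sorted timings.
    p95_index = math.ceil(0.95 * len(timings)) - 1
    return {
        "mean_ms": statistics.fmean(timings),
        "p95_ms": timings[p95_index],
        "max_ms": timings[-1],
    }


def meets_budget(stats: Dict[str, float], budget_ms: float = 200.0) -> bool:
    """Assumed rule: the 95th-percentile latency must stay under the budget."""
    return stats["p95_ms"] < budget_ms
```

With a real model, `predict` would wrap image preprocessing plus the forward pass, so the measured time matches what the user actually waits for.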

🛠️ My Technical Approach

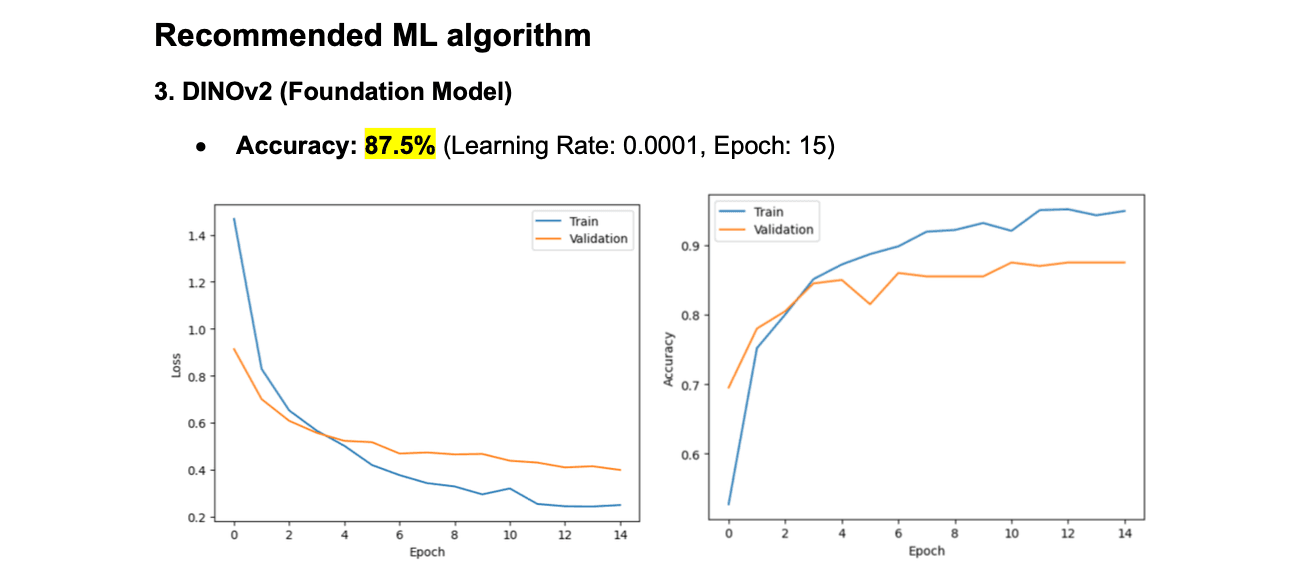

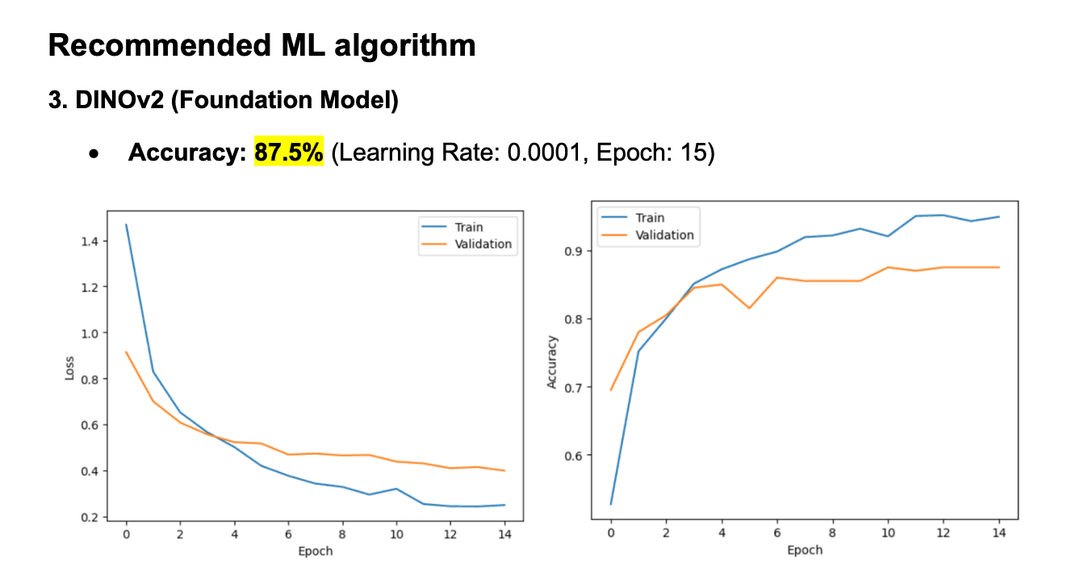

I explored three distinct architectures to find the optimal balance between speed and reliability:

- ResNet18 (Standard CNN): Achieved 74% accuracy.

- ResNet18 (Frozen Backbone): Faster training but lower performance at 66% accuracy.

- DINOv2 (Foundation Model): My recommended algorithm, delivering a significant performance jump to 87.5% accuracy.

Why DINOv2?

DINOv2 is a Vision Transformer-based foundation model pre-trained with self-supervision, so its image features transfer well to small datasets. This let me reach high accuracy with only about 1,000 labeled images instead of a massive labeled dataset.

📈 Results & Key Achievements

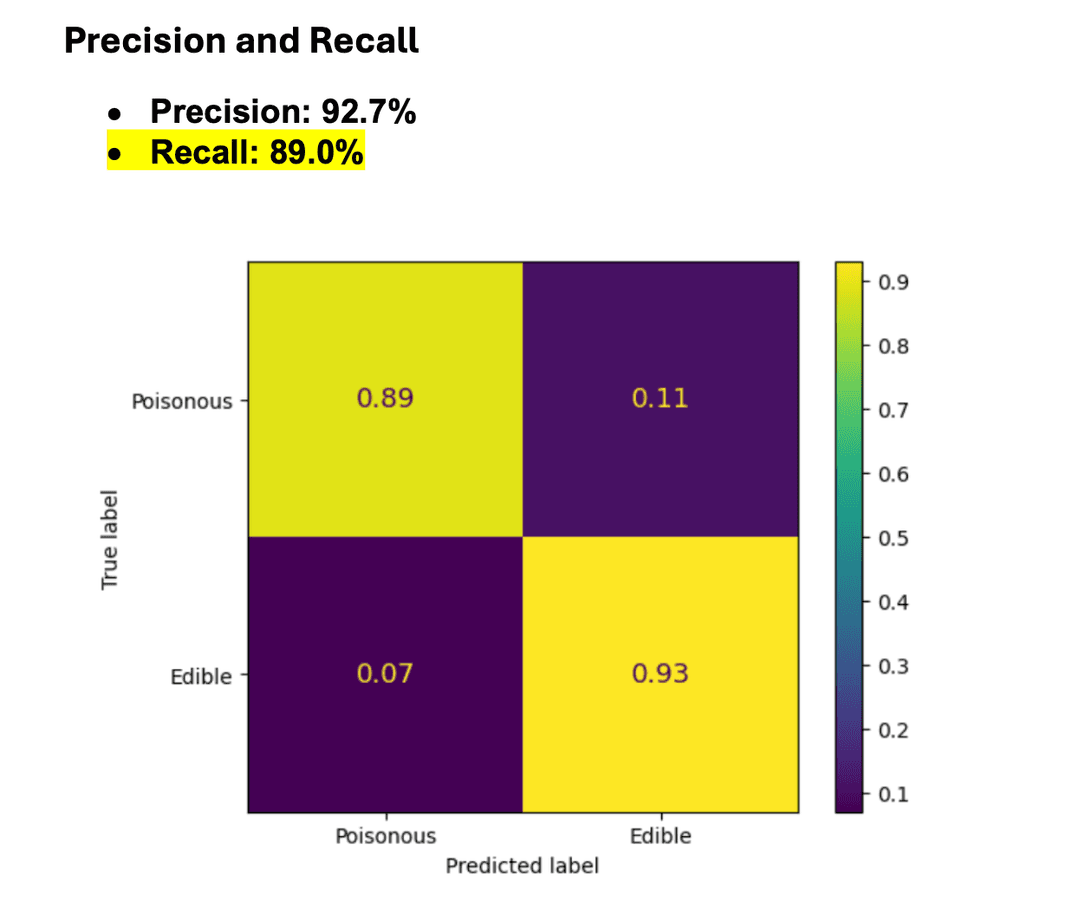

Given the high stakes of fungi identification, I evaluated my final model (DINOv2) on precision and recall as well as accuracy:

| Metric | Result |
|---|---|
| Accuracy | 87.5% |
| Precision | 92.7% |
| Recall | 89.0% |
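For reference, the three metrics in the table follow from the counts of a binary confusion matrix. The sketch below uses made-up counts purely for illustration; they are not the project's results, and the write-up does not state which class was treated as positive.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConfusionCounts:
    """Counts for a binary classifier, relative to the chosen positive class."""
    tp: int  # positives predicted as positive
    fp: int  # negatives predicted as positive
    fn: int  # positives predicted as negative
    tn: int  # negatives predicted as negative


def accuracy(c: ConfusionCounts) -> float:
    total = c.tp + c.fp + c.fn + c.tn
    return (c.tp + c.tn) / total if total else 0.0


def precision(c: ConfusionCounts) -> float:
    predicted_positive = c.tp + c.fp
    return c.tp / predicted_positive if predicted_positive else 0.0


def recall(c: ConfusionCounts) -> float:
    actual_positive = c.tp + c.fn
    return c.tp / actual_positive if actual_positive else 0.0


# Illustrative counts only (hypothetical, not taken from the project).
toy = ConfusionCounts(tp=8, fp=2, fn=1, tn=9)
print(f"accuracy={accuracy(toy):.3f} "
      f"precision={precision(toy):.3f} "
      f"recall={recall(toy):.3f}")
```

If "poisonous" is taken as the positive class, a false negative is a poisonous specimen labeled edible, which is why recall deserves as much attention as accuracy here.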

Handling Data Anomalies

The raw dataset included atypical images such as drawings, macro crops, and distant photos. I applied data augmentation (horizontal and vertical flips, rotation, and resizing) to improve robustness. I also addressed a class imbalance in which certain species were overrepresented, to keep the model from favoring those classes.
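One common way to counter class imbalance is to weight each class inversely to its frequency. The write-up does not say which technique was used, so treat the following as a sketch of that general idea, with a hypothetical function name and toy labels.

```python
from collections import Counter
from typing import Dict, Hashable, Sequence


def inverse_frequency_weights(labels: Sequence[Hashable]) -> Dict[Hashable, float]:
    """Return weight = n_samples / (n_classes * class_count) for each class.

    Overrepresented classes get weights below 1 and rare classes get
    weights above 1, so each class contributes equally in aggregate.
    """
    if not labels:
        raise ValueError("labels must not be empty")
    counts = Counter(labels)
    n_samples = len(labels)
    n_classes = len(counts)
    return {cls: n_samples / (n_classes * count) for cls, count in counts.items()}


# Toy labels (hypothetical): one class is three times as common as the other.
toy_labels = ["common_species"] * 6 + ["rare_species"] * 2
print(inverse_frequency_weights(toy_labels))
```

Weights like these can be passed to a weighted loss during training, for example through the `weight` argument of PyTorch's `CrossEntropyLoss`.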

🛡️ Ensuring Reliability & Safety

In software engineering, a model is only as good as its failure handling. I performed a confidence calibration analysis, which revealed that the model is "underconfident": its observed accuracy is often higher than the probability it assigns to its own predictions.
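A calibration analysis of this kind typically groups predictions into confidence bins and compares each bin's average confidence with its observed accuracy. Below is a minimal sketch of that comparison using toy numbers. The bin count, function names, and data are assumptions, not the analysis actually run for this project.

```python
from typing import List, Sequence, Tuple

# Each row is (number of predictions, mean confidence, observed accuracy).
BinRow = Tuple[int, float, float]


def reliability_bins(confidences: Sequence[float],
                     correct: Sequence[bool],
                     n_bins: int = 5) -> List[BinRow]:
    """Group predictions into equal-width confidence bins."""
    buckets: List[List[Tuple[float, bool]]] = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        index = min(int(conf * n_bins), n_bins - 1)  # conf == 1.0 goes in the last bin
        buckets[index].append((conf, ok))

    rows: List[BinRow] = []
    for members in buckets:
        if not members:
            continue
        mean_conf = sum(conf for conf, _ in members) / len(members)
        acc = sum(1 for _, ok in members if ok) / len(members)
        rows.append((len(members), mean_conf, acc))
    return rows


def expected_calibration_error(rows: Sequence[BinRow]) -> float:
    """Size-weighted average of |accuracy - confidence| over the bins."""
    total = sum(n for n, _, _ in rows)
    return sum(n / total * abs(acc - conf) for n, conf, acc in rows)


def signed_gap(rows: Sequence[BinRow]) -> float:
    """Size-weighted (accuracy - confidence); positive means underconfident."""
    total = sum(n for n, _, _ in rows)
    return sum(n / total * (acc - conf) for n, conf, acc in rows)


# Toy data (hypothetical): accuracy exceeds confidence in both bins.
toy_conf = [0.65, 0.65, 0.65, 0.65, 0.85, 0.85, 0.85, 0.85]
toy_correct = [True, True, True, False, True, True, True, True]
rows = reliability_bins(toy_conf, toy_correct)
print(expected_calibration_error(rows), signed_gap(rows))
```

A positive signed gap corresponds to the underconfident behavior described above: the model is right more often than its own scores suggest.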

My Recommendations for Integration:

- Dynamic UI Guides: Recommended a mushroom-shaped camera overlay to help users frame shots correctly.

- Environmental Alerts: Logic to trigger "Too Dark" warnings or "One Fungus Per Photo" messages to reduce false negatives.

- Risk Transparency: The app should display accuracy rates and clear warnings so that users treat the tool as a reference, not a safety guarantee.
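As one illustration of the environmental alerts above, a "Too Dark" warning could be driven by the average brightness of the captured frame. This is a sketch under assumptions: the function names and the threshold of 40 (on a 0 to 255 scale) are hypothetical, and a real app would tune the threshold on actual photos.

```python
from typing import Iterable, Tuple

Pixel = Tuple[int, int, int]  # (red, green, blue), each 0-255


def mean_luma(pixels: Iterable[Pixel]) -> float:
    """Average perceived brightness (Rec. 601 luma) over RGB pixels."""
    total = 0.0
    count = 0
    for red, green, blue in pixels:
        total += 0.299 * red + 0.587 * green + 0.114 * blue
        count += 1
    if count == 0:
        raise ValueError("image has no pixels")
    return total / count


def is_too_dark(pixels: Iterable[Pixel], threshold: float = 40.0) -> bool:
    """Assumed rule: warn when the average luma falls below the threshold."""
    return mean_luma(pixels) < threshold


# Toy frames (hypothetical): a nearly black image and a well-lit one.
dark_frame = [(10, 10, 10)] * 4
bright_frame = [(200, 200, 200)] * 4
print(is_too_dark(dark_frame), is_too_dark(bright_frame))
```

In the app, the pixel values would come from the decoded camera frame (for example an image loaded with PIL), and the check would run before the classifier so that an unusable photo never reaches it.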

💡 Reflection

This project demonstrated my ability to bridge the gap between complex ML research and practical software constraints. By prioritizing latency and resource management, I delivered a high-accuracy solution ready for mobile integration.

Key Skills Utilized:

- Python (PyTorch / PIL)

- Model Optimization for CPU-only environments

- Data Augmentation & Pipeline Engineering

- Reliability & Ethics in AI
